GPT-5 Mini vs Claude 4.1 Opus

Compare GPT-5 Mini and Claude 4.1 Opus. Find out which one is better for your use case.

Model Comparison

| Feature | GPT-5 Mini | Claude 4.1 Opus |
|---|---|---|
| Provider | OpenAI | Anthropic |
| Model Type | text | text |
| Context Window | 400,000 tokens | 200,000 tokens |
| Input Cost | $0.25 / 1M tokens | $15.00 / 1M tokens |
| Output Cost | $2.00 / 1M tokens | $75.00 / 1M tokens |
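To make the price gap concrete, here is a minimal sketch that turns the per-million-token prices from the table into a per-request cost. The model keys (`gpt-5-mini`, `claude-opus-4.1`), the `request_cost` helper, and the example token counts are illustrative assumptions, not official API identifiers or measured workloads.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the comparison table above.
# The dictionary keys are hypothetical labels, not official model IDs.
PRICES = {
    "gpt-5-mini": {"input": Decimal("0.25"), "output": Decimal("2.00")},
    "claude-opus-4.1": {"input": Decimal("15.00"), "output": Decimal("75.00")},
}

TOKENS_PER_UNIT = Decimal(1_000_000)


def request_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the USD cost of one request at the listed per-token prices."""
    price = PRICES[model]
    total = price["input"] * input_tokens + price["output"] * output_tokens
    return total / TOKENS_PER_UNIT


if __name__ == "__main__":
    # Assumed example workload: 10,000 input tokens and 1,000 output tokens.
    for name in PRICES:
        print(name, request_cost(name, 10_000, 1_000))
```

For this assumed request shape, the listed prices work out to $0.0045 on GPT-5 Mini and $0.225 on Claude 4.1 Opus, a 50x difference. The ratio shifts with the mix of input and output tokens, so it is worth plugging in your own traffic profile.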

Strengths & Best Use Cases

GPT-5 Mini

1. High reasoning performance

- Retains strong reasoning capabilities despite being a smaller, faster model.

- Suitable for tasks requiring accurate logic and structured thinking.

2. Fast and cost-efficient

- Optimized for speed, making it ideal for real-time or high-volume workloads.

- Priced at roughly one-fifth of GPT-5's per-token rates while maintaining solid capability.

3. Great for well-defined tasks

- Excels when prompts are precise and objectives are clearly specified.

- More predictable and stable for deterministic workflows.

4. Multimodal input

- Accepts text + image as input.

- Outputs text only.

5. Tool support

- Works with Web Search, File Search, Code Interpreter, MCP.

- Does not support Image Generation as a tool or Computer Use.

Claude 4.1 Opus

1. Advanced Coding Performance

- Achieves 74.5% on SWE-bench Verified, improving the Claude family's state-of-the-art coding abilities.

- Stronger at:

  - Multi-file code refactoring

  - Large codebase debugging

  - Pinpointing exact corrections without unnecessary edits

- Outperforms Opus 4 and shows gains comparable to jumps seen in past major releases.

2. Improved Agentic & Research Capabilities

- Better at maintaining detail accuracy in long research tasks.

- Enhanced agentic search and step-by-step problem solving.

- Performs reliably across complex multi-turn reasoning tasks.

3. Validated by Real-World Users

- GitHub: Better multi-file refactoring and code adjustments.

- Rakuten Group: High precision debugging with minimal collateral changes.

- Windsurf: One standard deviation improvement over Opus 4 on their junior developer benchmark, similar in magnitude to the jump from Sonnet 3.7 to Sonnet 4.

4. Hybrid-Reasoning Benchmark Improvements

- Improvements across TAU-bench, GPQA Diamond, MMMLU, MMMU, AIME (with extended thinking).

- Stronger robustness in long-context reasoning tasks.

Turn your AI ideas into AI products with the right AI model

Appaca is the complete platform for building AI agents, automations, and customer-facing interfaces. No coding required.

Customer-facing Interface

Create and style user interfaces for your AI agents and tools easily according to your brand.

Multimodel LLMs

Create, manage, and deploy custom AI models for text, image, and audio - trained on your own knowledge base.

Agentic workflows and integrations

Create a workflow for your AI agents and tools to perform tasks and integrations with third-party services.


All you need to launch and sell your AI products with the right AI model

Appaca provides out-of-the-box solutions your AI apps need.

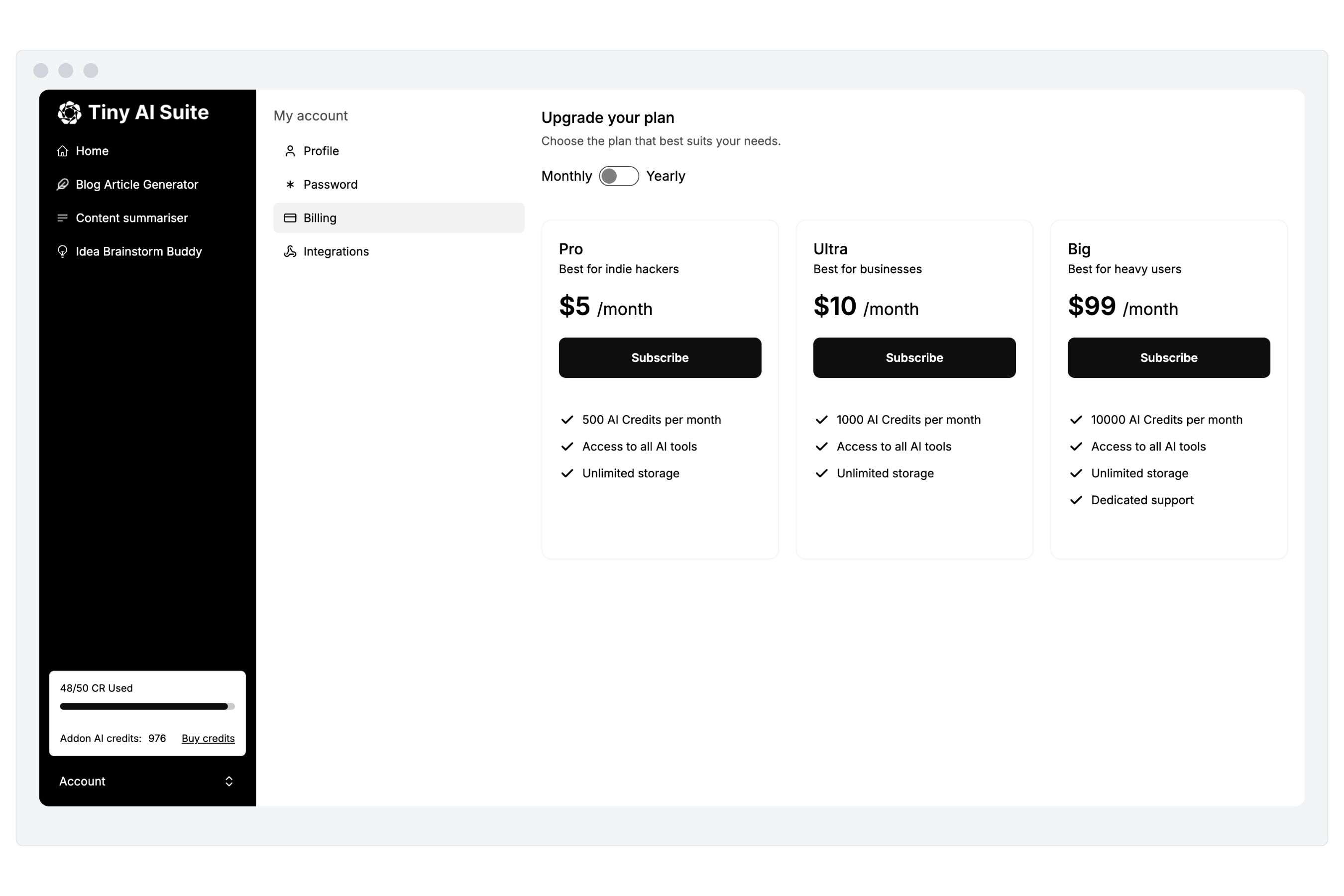

Monetize your AI

Sell your AI agents and tools as a complete product with subscription and AI credits billing. Generate revenue for your business.

“I've built with various AI tools and have found Appaca to be the most efficient and user-friendly solution.”

Cheyanne Carter

Founder & CEO, Edubuddy

Put your AI idea in front of your customers today

Use Appaca to build and launch your AI products in minutes.