GPT-OSS 120B vs Gemini 1.5 Pro

Compare GPT-OSS 120B and Gemini 1.5 Pro. Find out which one is better for your use case.

Model Comparison

| Feature | GPT-OSS 120B | Gemini 1.5 Pro |
|---|---|---|
| Provider | OpenAI | Google |
| Model Type | text | text |
| Context Window | 131,072 tokens | 1,000,000 tokens |
| Input Cost | $0.00 / 1M tokens | $3.50 / 1M tokens |
| Output Cost | $0.00 / 1M tokens | $7.00 / 1M tokens |
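
To make the pricing rows concrete, here is a minimal Python sketch that estimates the cost of a single request from the rates listed in the table. The workload (200,000 input tokens, 5,000 output tokens) is a hypothetical example, and the function name is illustrative only.

```python
# Prices copied from the comparison table (USD per 1M tokens).
PRICES = {
    "GPT-OSS 120B": {"input": 0.00, "output": 0.00},
    "Gemini 1.5 Pro": {"input": 3.50, "output": 7.00},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the listed rates."""
    rates = PRICES[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


# Hypothetical workload: 200,000 input tokens and 5,000 output tokens.
for name in PRICES:
    print(f"{name}: ${estimate_cost(name, 200_000, 5_000):.4f}")
```

The $0.00 rate for GPT-OSS 120B covers per-token fees only. If you self-host the model, hardware and operations costs still apply and are not captured by this sketch.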

Strengths & Best Use Cases

GPT-OSS 120B

1. OpenAI's most capable open-weight model

- 117B total parameters (5.1B active per token), yet it fits on a single H100 GPU.

- High reasoning quality compared to other open models.
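
As a rough check on the single-GPU claim, the following sketch estimates weight storage at two precisions. It assumes the 80 GB H100 variant and a quantized format of about 4.25 bits per parameter; both figures are assumptions for illustration. Real deployments also need memory for activations and the KV cache, and not every layer is necessarily stored at the same precision.

```python
TOTAL_PARAMS = 117e9   # total parameter count quoted above
H100_MEMORY_GB = 80    # assumption: the 80 GB H100 variant


def weight_footprint_gb(params: float, bits_per_param: float) -> float:
    """Approximate weight storage in GB (10^9 bytes) at a given precision."""
    return params * bits_per_param / 8 / 1e9


# 16-bit weights versus an assumed ~4.25-bit quantized format.
for label, bits in [("16-bit", 16.0), ("~4.25-bit (assumed)", 4.25)]:
    gb = weight_footprint_gb(TOTAL_PARAMS, bits)
    print(f"{label}: {gb:.1f} GB, fits in {H100_MEMORY_GB} GB: {gb < H100_MEMORY_GB}")
```

Under these assumptions, 16-bit weights would far exceed one GPU, while the quantized weights leave some headroom on an 80 GB card.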

2. Apache 2.0 license

- Fully permissive, no copyleft or patent restrictions.

- Safe for commercial products, research, and redistribution.

3. Configurable reasoning effort

- Supports adjustable reasoning: low, medium, high.

- Lets developers balance latency vs. depth.
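
How the effort level is passed depends on the serving stack. The sketch below assumes a runtime that reads a `Reasoning: <level>` line from the system message; hosted APIs may expose a dedicated parameter instead. The helper name `build_messages` is hypothetical.

```python
VALID_EFFORTS = ("low", "medium", "high")


def build_messages(user_prompt: str, effort: str = "medium") -> list[dict]:
    """Build a chat message list that requests a given reasoning effort.

    Assumption: the serving runtime honors a "Reasoning: <level>" line
    in the system message.
    """
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return [
        {"role": "system", "content": f"Reasoning: {effort}"},
        {"role": "user", "content": user_prompt},
    ]


# Low effort for a quick lookup, high effort for a multi-step problem.
quick = build_messages("What is the capital of France?", effort="low")
deep = build_messages("Prove that the square root of 2 is irrational.", effort="high")
print(quick[0]["content"])
print(deep[0]["content"])
```

Lower effort generally means shorter reasoning and lower latency; higher effort trades latency for depth.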

4. Full chain-of-thought access

- Unlike closed commercial models, it exposes its complete reasoning traces.

- Useful for debugging, auditing, safety research, and transparency.

5. Fine-tunable

- Supports full-parameter fine-tuning.

- Can be adapted to domain-specific workflows and proprietary datasets.

6. Agentic capabilities

- Built-in function calling.

- Native support for web browsing, Python execution, and structured outputs.

- Ideal for open-source agents, full-stack automation, and developer tooling.
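
Function calling usually works in two halves: the application describes its tools to the model, then executes whichever call the model emits. The sketch below shows the application side only. The tool `get_weather`, its schema, and the simulated model output are all hypothetical, and no model is contacted.

```python
import json

# Hypothetical tool description in the JSON-Schema style many chat APIs accept.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Return the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def get_weather(city: str) -> dict:
    """Stand-in implementation; a real tool would call a weather service."""
    return {"city": city, "temperature_c": 21}


# Maps tool names the model may emit to local Python callables.
TOOL_REGISTRY = {"get_weather": get_weather}


def dispatch_tool_call(tool_call: dict) -> str:
    """Run the function named in a tool call and return its result as JSON."""
    func = TOOL_REGISTRY[tool_call["name"]]
    arguments = json.loads(tool_call["arguments"])
    return json.dumps(func(**arguments))


# Simulated model output: a tool name plus JSON-encoded arguments.
simulated_call = {"name": "get_weather", "arguments": '{"city": "Oslo"}'}
print(dispatch_tool_call(simulated_call))
```

In an agent loop, the returned JSON string would be sent back to the model as a tool result so it can compose its final answer.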

7. Tooling ecosystem support

- Compatible with Chat Completions, Responses API, Assistants, Realtime, Batch, and Fine-tuning endpoints.

- Supports Image Generation, Code Interpreter (via Python runtime), and more.

8. Open-source availability

- Downloadable from Hugging Face for local or on-prem deployment.

- Supports full offline, private, or self-hosted usage.

9. Streaming + function calling support

- Real-time interactions.

- Strong for interactive agents, coding assistants, and UI-driven workflows.

Gemini 1.5 Pro

1. Breakthrough long-context window up to 1,000,000 tokens

- Can process 1 hour of video, 11 hours of audio, 700k+ words, or 100k+ lines of code in a single prompt.

- Supports advanced retrieval, reasoning, summarization, and cross-document tasks.

- Achieves 99% retrieval accuracy on 1M-token Needle-In-A-Haystack tests.
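
To see what the window sizes mean in practice, this sketch estimates whether a document of a given word count fits each model's context window. It uses the rough ratio implied above (about 700,000 words per 1,000,000 tokens); actual token counts depend on the tokenizer and the language, and the output reserve of 4,000 tokens is an arbitrary assumption.

```python
# Context window sizes from the comparison table (tokens).
CONTEXT_WINDOWS = {"GPT-OSS 120B": 131_072, "Gemini 1.5 Pro": 1_000_000}

# Rough ratio implied by "700k+ words" per 1,000,000 tokens; an approximation.
WORDS_PER_TOKEN = 0.7


def estimated_tokens(word_count: int) -> int:
    """Estimate the token count of a text from its word count."""
    return round(word_count / WORDS_PER_TOKEN)


def fits(model: str, word_count: int, reserve_for_output: int = 4_000) -> bool:
    """Check whether the prompt plus an output reserve fits the window."""
    return estimated_tokens(word_count) + reserve_for_output <= CONTEXT_WINDOWS[model]


# Hypothetical documents: an 80,000-word report and a 150,000-word manual.
for words in (80_000, 150_000):
    for model in CONTEXT_WINDOWS:
        print(f"{words:,} words -> {model}: fits={fits(model, words)}")
```

For inputs that exceed the smaller window, the usual alternatives are chunking or retrieval rather than a single long prompt.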

2. Strong multimodal reasoning across video, audio, images, and text

- Can analyze long videos (e.g., full silent films), track events, infer causality, and identify small details.

- Handles large complex documents like manuals, transcripts, and books.

3. High-performance reasoning and problem solving

- Comparable to Gemini 1.0 Ultra across many benchmarks.

- Excels at code reasoning, multi-step explanations, and large-scale codebase analysis.

4. Advanced code understanding and generation

- Performs problem-solving on codebases exceeding 100,000 lines.

- Capable of cross-file reasoning, debugging guidance, API comprehension, and generating structured code improvements.

5. Efficient Mixture-of-Experts (MoE) architecture

- Activates only relevant expert pathways per input.

- Enables faster training, lower latency, and more efficient serving.

- Dramatically improves scalability and inference speed.
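
The idea of activating only some experts per input can be illustrated with generic top-k gating. This is a textbook sketch, not Gemini's actual routing, which is not described here. The experts are toy functions and the gate scores are fixed by hand, whereas a real router computes them from the input with learned weights.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Convert raw gate scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores: list[float], k: int = 2) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x: float, experts: list, gate_scores: list[float], k: int = 2) -> float:
    """Combine outputs of only the selected experts; the rest are never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))


# Four toy experts; only two are evaluated for this input.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
gate_scores = [0.1, 2.0, -1.0, 1.5]  # hand-picked scores for illustration

print(route_top_k(gate_scores))
print(moe_forward(3.0, experts, gate_scores))
```

Because only `k` experts run per input, compute per token grows with `k` rather than with the total number of experts, which is the source of the efficiency claims above.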

6. Exceptional in-context learning capabilities

- Learns new tasks directly from long prompts without fine-tuning.

- Demonstrated by learning to translate a low-resource language (Kalamang) from a grammar manual.

7. High-fidelity multimodal understanding

- Reads, analyzes, and reasons about long PDFs, code repositories, images, and videos together.

- Enables new classes of applications: legal analysis, scientific review, codebase audits, long-form content generation, etc.

8. Safety and reliability first

- Undergoes extensive ethics and safety testing, including red-teaming.

- Improved representational safety and reduced hallucinations compared to previous generations.

9. Available for developers and enterprises

- Accessible via AI Studio and Vertex AI.

- Offers pricing tiers for expanded context windows.

- Designed for real enterprise-scale workloads.

10. Widely capable mid-size model

- Positioned between Gemini Pro and Gemini Ultra generations.

- Balances reasoning, multimodality, long-context handling, and speed.

Turn your AI ideas into AI products with the right AI model

Appaca is the complete platform for building AI agents, automations, and customer-facing interfaces. No coding required.

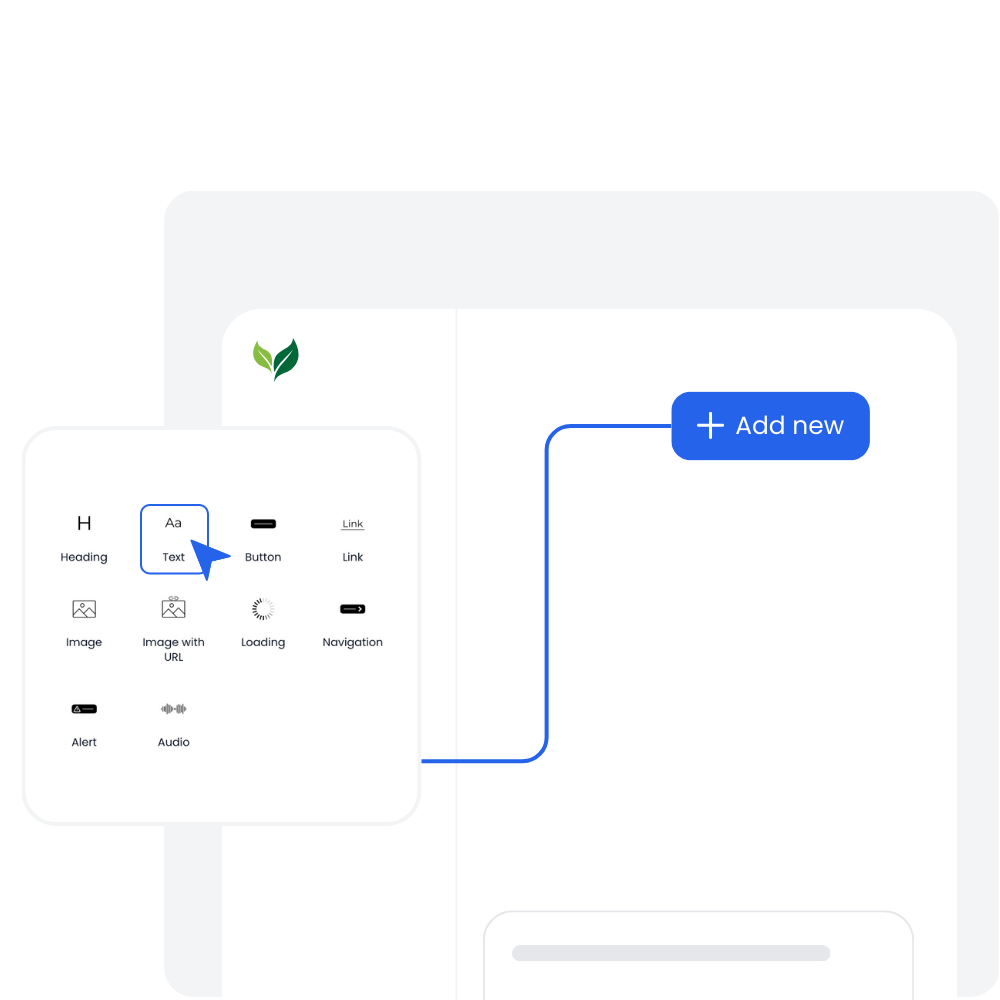

Customer-facing Interface

Create and style user interfaces for your AI agents and tools easily according to your brand.

Multimodel LLMs

Create, manage, and deploy custom AI models for text, image, and audio - trained on your own knowledge base.

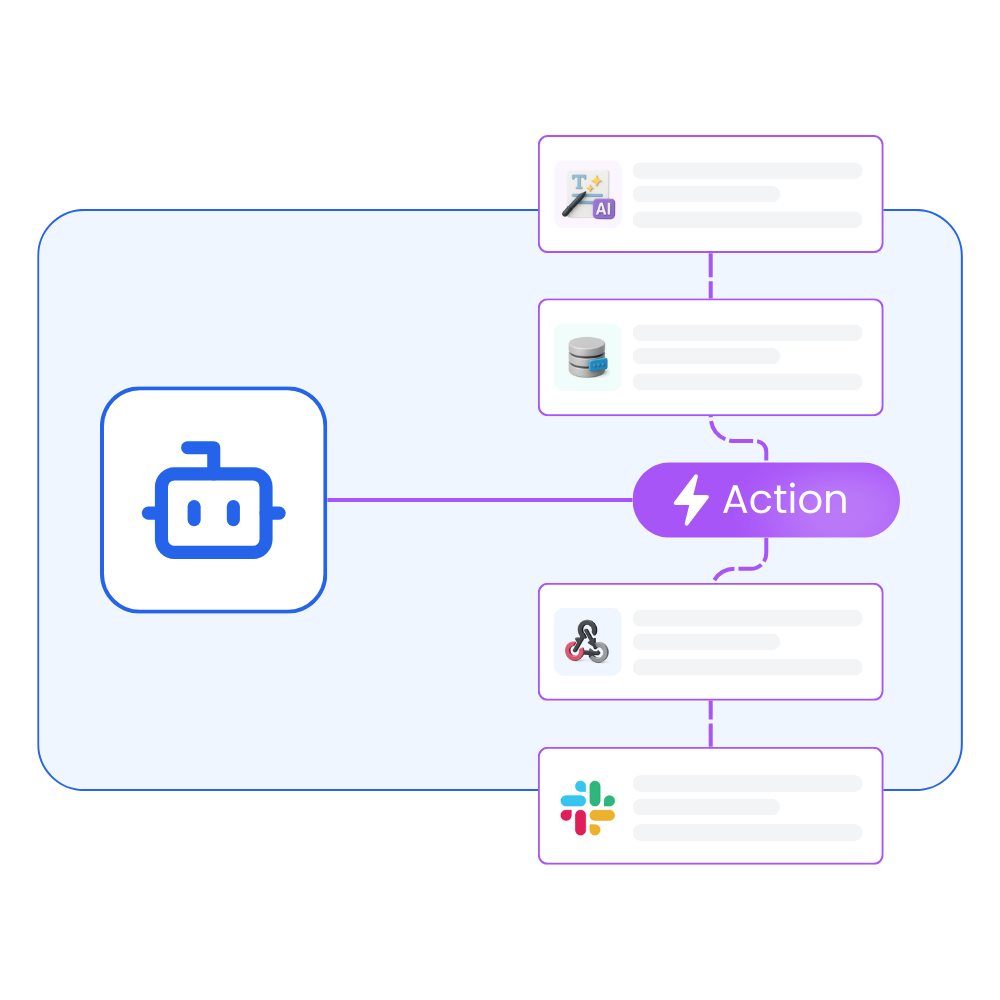

Agentic workflows and integrations

Create a workflow for your AI agents and tools to perform tasks and integrations with third-party services.

Trusted by incredible people at

All you need to launch and sell your AI products with the right AI model

Appaca provides out-of-the-box solutions your AI apps need.

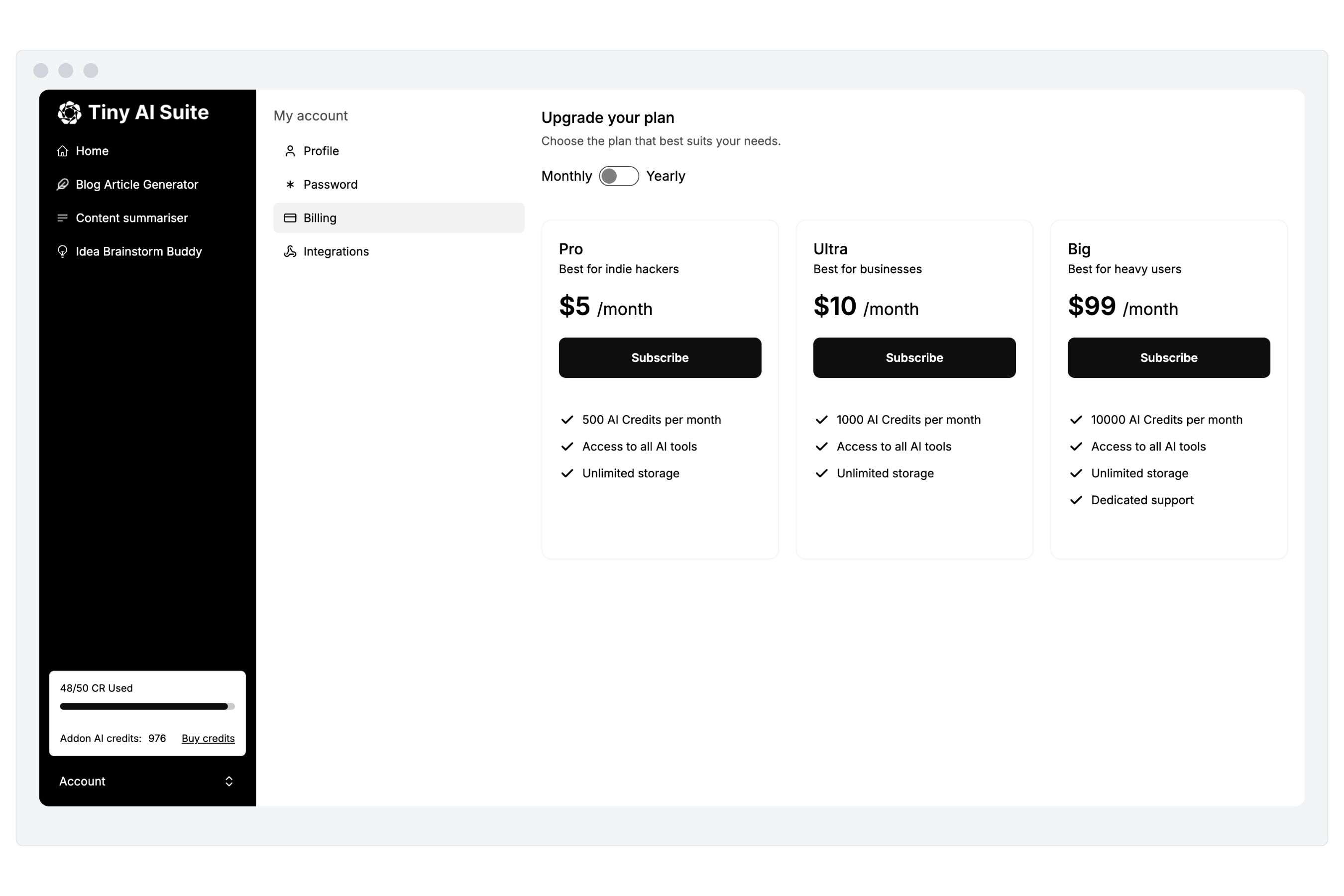

Monetize your AI

Sell your AI agents and tools as a complete product with subscription and AI credits billing. Generate revenue for your busienss.

“I've built with various AI tools and have found Appaca to be the most efficient and user-friendly solution.”

Cheyanne Carter

Founder & CEO, Edubuddy

Put your AI idea in front of your customers today

Use Appaca to build and launch your AI products in minutes.