GPT-OSS 20B vs Gemini 1.0 Pro

Compare GPT-OSS 20B and Gemini 1.0 Pro. Find out which one is better for your use case.

Model Comparison

| Feature | GPT-OSS 20B | Gemini 1.0 Pro |
|---|---|---|
| Provider | OpenAI | Google |
| Model Type | text | text |
| Context Window | 128,000 tokens | 128,000 tokens |
| Input Cost | $0.00 / 1M tokens | $0.50 / 1M tokens |
| Output Cost | $0.00 / 1M tokens | $1.50 / 1M tokens |
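To make the pricing rows concrete, the sketch below estimates token spend for a workload using only the per-1M-token prices listed in the table. The function name `estimate_cost` and the monthly token volumes are hypothetical, chosen for illustration. The $0.00 figure for GPT-OSS 20B covers token fees only; the cost of any hardware used for self-hosting is not modeled here.

```python
# Prices in USD per 1M tokens, copied from the comparison table.
PRICES = {
    "GPT-OSS 20B": {"input": 0.00, "output": 0.00},
    "Gemini 1.0 Pro": {"input": 0.50, "output": 1.50},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated token cost in USD for one workload."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical monthly workload: 200M input tokens and 50M output tokens.
    for name in PRICES:
        cost = estimate_cost(name, 200_000_000, 50_000_000)
        print(f"{name}: ${cost:,.2f}")
```

With these assumed volumes, the Gemini 1.0 Pro estimate comes to $175.00 (200 × $0.50 plus 50 × $1.50), while the GPT-OSS 20B token fee stays at $0.00.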

Strengths & Best Use Cases

GPT-OSS 20B

- Open weights under the Apache 2.0 license: you can use, modify, and deploy the model freely for commercial and academic work.

- Large model size (≈ 21B parameters) with Mixture-of-Experts (MoE) architecture: only ~3.6B parameters are active per token, yielding efficient inference.

- Very long context window: up to about 128K tokens (131,072 tokens, which some sources round to ~131K), enabling in-depth reasoning, long documents, or multi-turn context.

- Adjustable reasoning effort: you can trade latency against quality by choosing a reasoning effort level (low, medium, or high).

- Modest hardware requirements for its class: designed to run on a single 16 GB-class GPU or in optimized local deployments for lower-latency applications.

- Strong for reasoning, tool use, structured output, and chain-of-thought debugging: the model is open, so you can inspect its chain of thought.

- Flexibility: because the weights are available, you can self-host, fine-tune, or deploy offline, which gives more control than closed API models.
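The parameter counts and the 16 GB-class GPU claim in the list above can be related with some rough arithmetic. The sketch below is a back-of-the-envelope estimate, not a measurement: the helper names are hypothetical, the bits-per-parameter values are assumptions (the list does not state a weight precision), and the estimate covers weights only, ignoring KV cache and activations.

```python
TOTAL_PARAMS = 21e9    # approximate total parameters, from the list above
ACTIVE_PARAMS = 3.6e9  # approximate parameters active per token, from the list above


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters used for each token in an MoE model."""
    return active / total


def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"Active per token: {frac:.1%}")

    # Assumed precisions for illustration: 16-bit weights vs. ~4-bit quantized weights.
    for bits in (16, 4):
        print(f"{bits}-bit weights: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
```

Under these assumptions, about 17% of the parameters are active per token, and 4-bit weights come to roughly 10.5 GB versus roughly 42 GB at 16 bits. That suggests why a quantized build could plausibly fit on a 16 GB card while a 16-bit copy would not.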

Gemini 1.0 Pro

1. Strong all-purpose performance

- Designed as Google's balanced middle-tier model.

- Handles a wide range of tasks: reasoning, writing, coding, and problem-solving.

2. Natively multimodal understanding

- Trained from the ground up on text, images, audio, and video.

- More consistent multimodal reasoning than stitched-together architectures.

3. Great cost-to-capability ratio

- Offers much of Gemini Ultra's reasoning quality at a fraction of the cost.

- Strong default choice for large-scale production workloads.

4. Reliable reasoning and factual performance

- Performs well on benchmarks like MMLU, MMMU, and code reasoning.

- Handles long-form analysis, multi-step reasoning, and structured problem solving.

5. Advanced coding capabilities

- Supports major languages such as Python, Java, C++, Go.

- Generates, edits, debugs, and explains code with high accuracy.

- A specialized version of Gemini Pro powers advanced coding systems such as AlphaCode 2.

6. Efficient and scalable

- Optimized for Google TPUs for lower latency and faster inference.

- Suitable for batch workloads, agents, and complex multi-step pipelines.

7. Strong multimodal reasoning

- Understands math, physics, and scientific diagrams.

- Handles mixed data inputs (charts + text, screenshots + instructions, etc.).

8. Enterprise-ready reliability

- Available through Google AI Studio and Vertex AI.

- Benefits from enterprise-grade governance, safety, privacy, and compliance.

Turn your AI ideas into AI products with the right AI model

Appaca is the complete platform for building AI agents, automations, and customer-facing interfaces. No coding required.

Customer-facing Interface

Create and style user interfaces for your AI agents and tools easily according to your brand.

Multimodel LLMs

Create, manage, and deploy custom AI models for text, image, and audio - trained on your own knowledge base.

Agentic workflows and integrations

Create a workflow for your AI agents and tools to perform tasks and integrations with third-party services.

Trusted by incredible people at

All you need to launch and sell your AI products with the right AI model

Appaca provides out-of-the-box solutions your AI apps need.

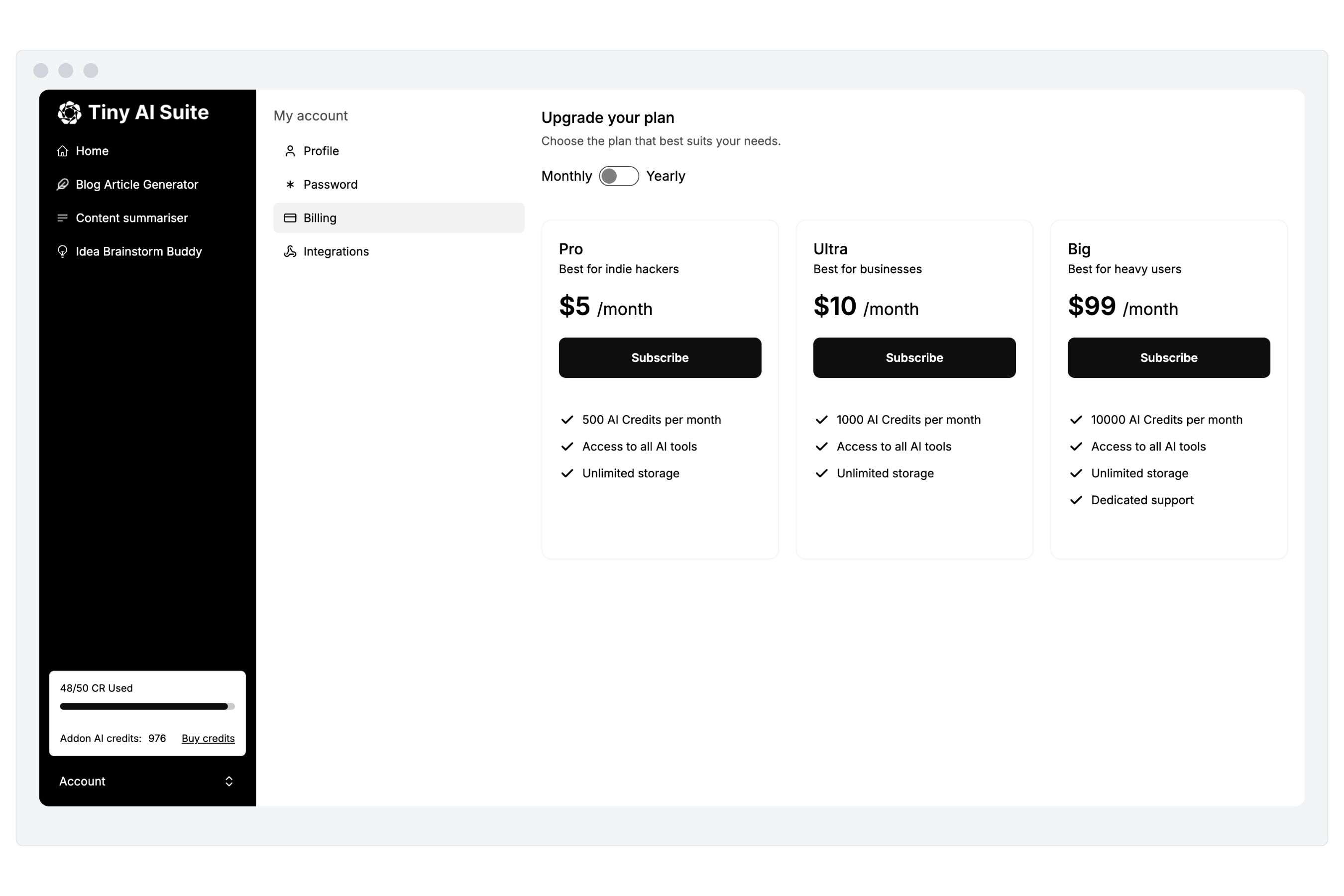

Monetize your AI

Sell your AI agents and tools as a complete product with subscription and AI credits billing. Generate revenue for your busienss.

“I've built with various AI tools and have found Appaca to be the most efficient and user-friendly solution.”

Cheyanne Carter

Founder & CEO, Edubuddy

Put your AI idea in front of your customers today

Use Appaca to build and launch your AI products in minutes.