o3 Mini vs Gemini 1.0 Pro

Compare o3 Mini and Gemini 1.0 Pro. Find out which one is better for your use case.

Pricing Comparison

o3 Mini Pricing

Gemini 1.0 Pro Pricing

Specifications Comparison

o3 Mini Specs

Gemini 1.0 Pro Specs

What are these models good for?

o3 Mini

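o3 Mini is OpenAI's compact reasoning model, geared toward math, science, and coding tasks. It offers adjustable reasoning effort and supports function calling and structured outputs, but it does not accept image input.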

Gemini 1.0 Pro

Gemini 1.0 Pro is a reliable and well-rounded model that balances performance and cost. It's suitable for a variety of tasks, including content generation, brainstorming, and editing. It's a practical choice for developing applications that require good quality at a reasonable price.

How to build apps powered by these models on Appaca

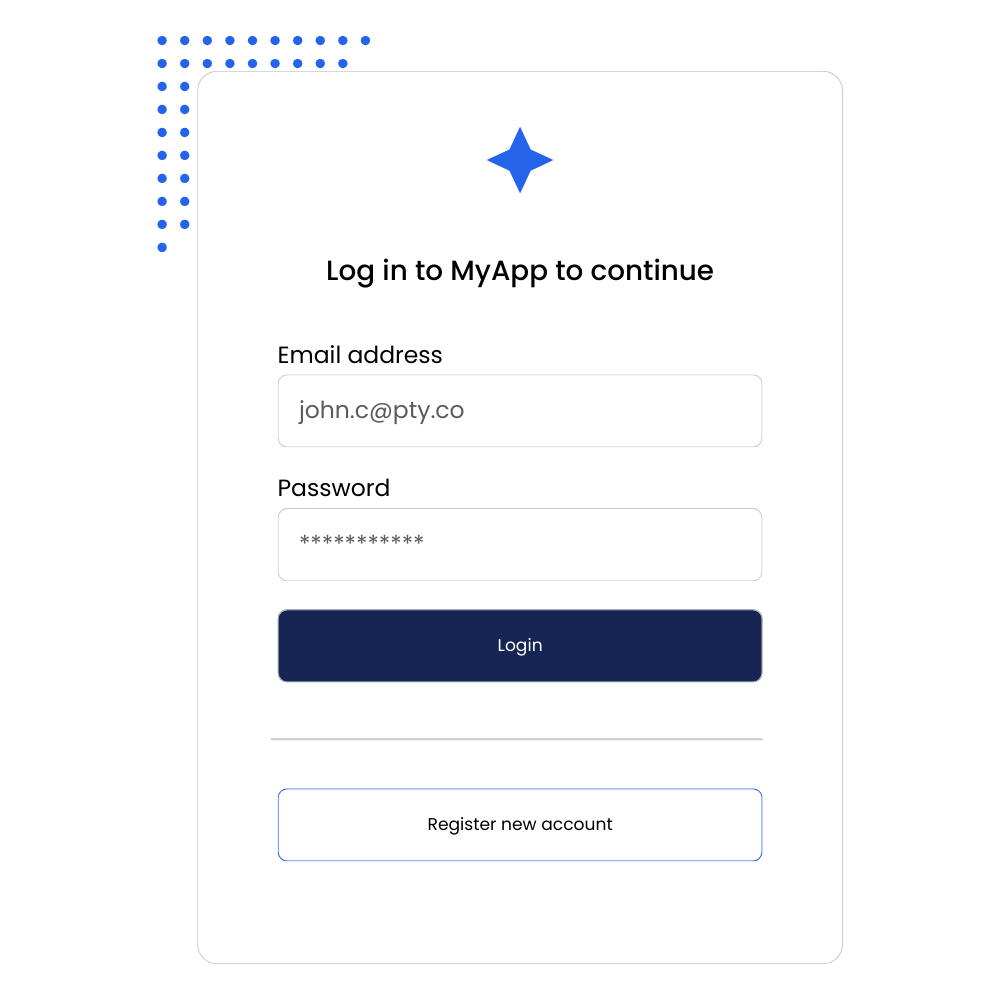

- Log in or create an account: Start by signing up or logging in to your Appaca account.

- Describe your app idea: Simply describe what you want to build, and the Appaca AI agent will generate the first draft of your application.

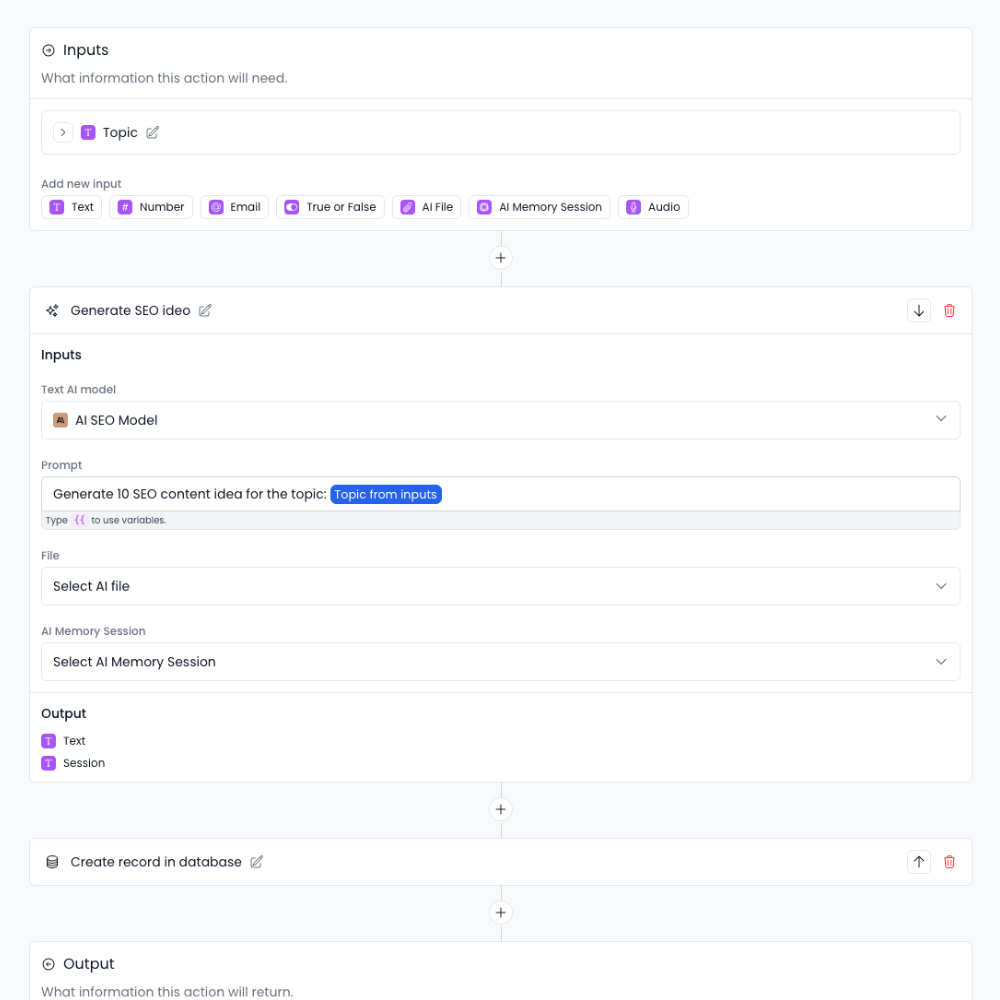

- Configure the AI Model: Navigate to the AI Studio within your project. You can create a new AI feature or update an existing one to use either the o3 Mini or Gemini 1.0 Pro model.

- Build Agentic Workflows: Create custom backend actions and user interfaces to build powerful, agentic workflows tailored to your app's needs.

- Set up Monetization: Easily configure pricing plans, subscriptions, or credit-based systems to monetize your AI application.

- Launch and Grow: Deploy your app with a single click and start generating revenue.

Build AI Apps in Minutes

Create a full-stack agentic application for your business. Ship AI solutions to your customers with a branded, user-facing interface.

Create custom AI models

Power your AI apps with popular Large Language Models (LLMs) such as OpenAI's GPT-4o, Anthropic's Claude, or Google's Gemini.

Build agentic workflows

Create backend workflows for your AI apps. Power them with AI models and integrate third-party tools.

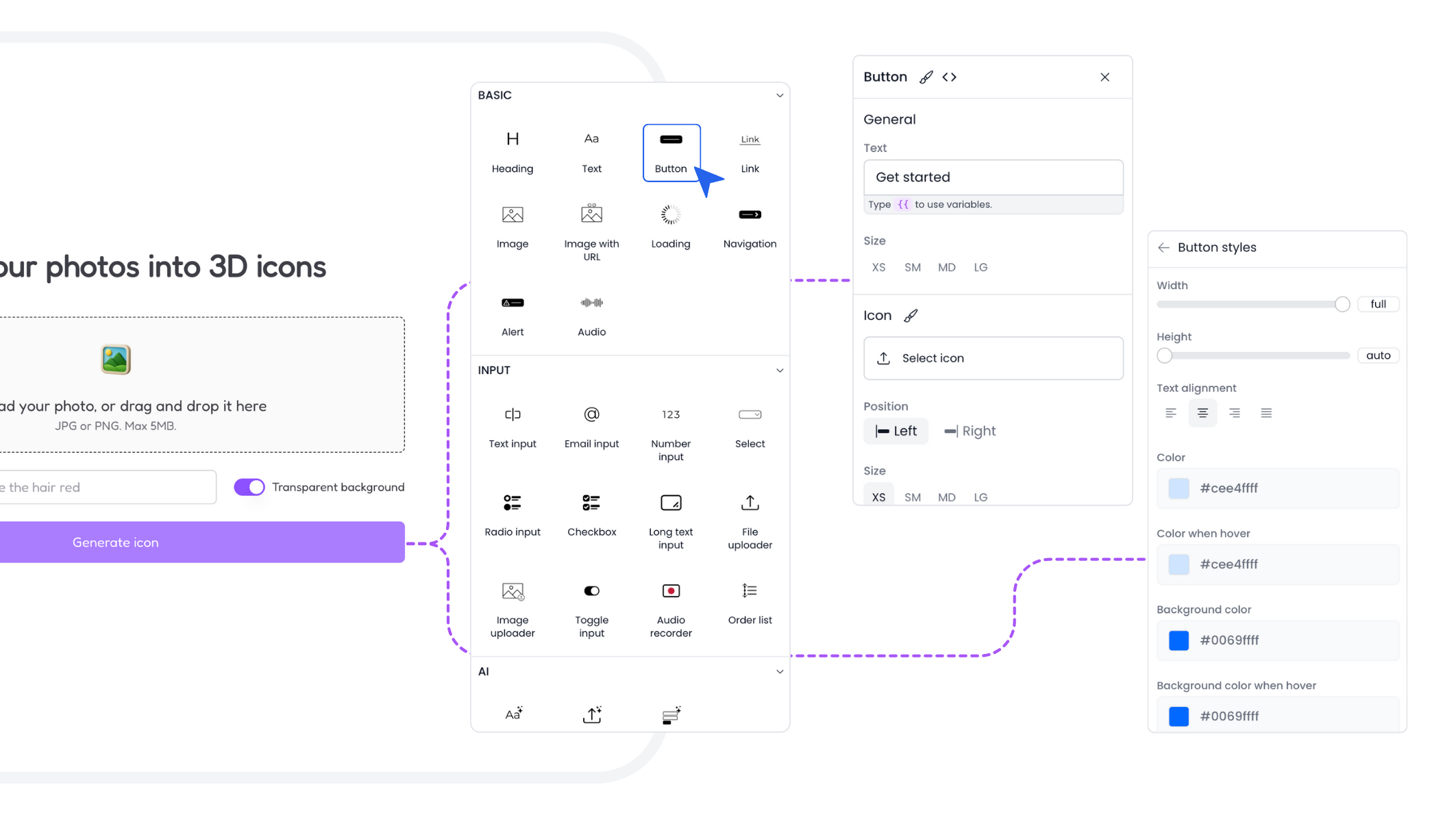

Create user interfaces for your AI apps

Use a variety of pre-built UI components without having to code. Customise the look and feel of your AI app to match your brand.

Ready-to-ship Today

Everything you need to run your AI business, on one platform. Authenticate your users and monetize your AI products.

Authenticate

Pre-built login and registration pages for your users. It comes with email workflows out of the box.

Manage Users

See your users' details in the users database. The app comes with an account page where your users can manage their accounts.

Store Data

Built-in database to store data in your AI apps. Easily manage your app data without hassle.

Monetize

Built-in Stripe integration for your AI apps. Charge your customers for subscriptions and AI credits.

Create email templates and send them to your users. Securely connect your email provider.

Custom code

Go further with custom scripting for agentic workflows or custom styling for your user interface.

“I've built with various AI tools and have found Appaca to be the most efficient and user-friendly solution.”

Cheyanne Carter

Founder & CEO, Edubuddy
